
How big or small should a (micro)service be?

4 minute read


20.04.2020

A question as controversial as whether eggs are good for you. And just as we have seen the tide turn on eggs, the same thing is happening these days with services.

People got bitten by tangled, tightly coupled monoliths that led to slow releases of buggy software followed by long stabilization periods. This became unacceptable in a new customer-oriented, innovation-driven world. If tightly coupled monolithic architectures are bad, then their opposite should be good. Thus, modular and loosely coupled architectures came along. This kind of architecture did make things better: modularization made systems more maintainable, and teams could prototype faster, so the innovation cycle shortened.

Modularization made things better. And thinking in true Extreme Programming fashion(*): if moving from a monolith to 5 services is good, then moving from 5 services to 15 should be even better, right? Let’s try that!

(*) Extreme Programming (XP) is a popular agile software development methodology that focuses on technical practices. Because some technical practices are considered “best practices” and “common sense”, it is clearly beneficial to use them. XP takes this argument to the extreme: if using these practices is good, let’s use them all the time! For example, if code review is useful, let’s do more of it (i.e. continuously)... and voilà, we get Pair Programming, one of the XP technical practices. If writing tests is good, let’s do more of it (i.e. continuously)... and there it is: Test-Driven Development as an XP practice.

So we, as an industry, started competing to slice our systems as thinly as possible. Very soon, organizations ended up with hundreds or even thousands of services. This, in turn, created a host of other problems that come with distributed systems: monitoring, logging, tracing and observability in general, security, standardization, governance, etc.

It seems that too thin doesn’t work either.

Gergely Orosz from Uber commented recently that overly thin services are overkill even for an organization as distributed as Uber. His team is now moving to something called “macroservices”.

> For the record, at Uber, we’re moving many of our microservices to what @copyconstruct calls macroservices (well-sized services).
>
> Exactly b/c testing and maintaining thousands of microservices is not only hard – it can cause more trouble long-term than it solves the short-term. https://t.co/VL8opOh1BY
>
> Gergely Orosz (@GergelyOrosz) April 6, 2020

The term itself is irrelevant here. What matters is that too thin is as bad as too monolithic. Gergely gave a more detailed explanation here.

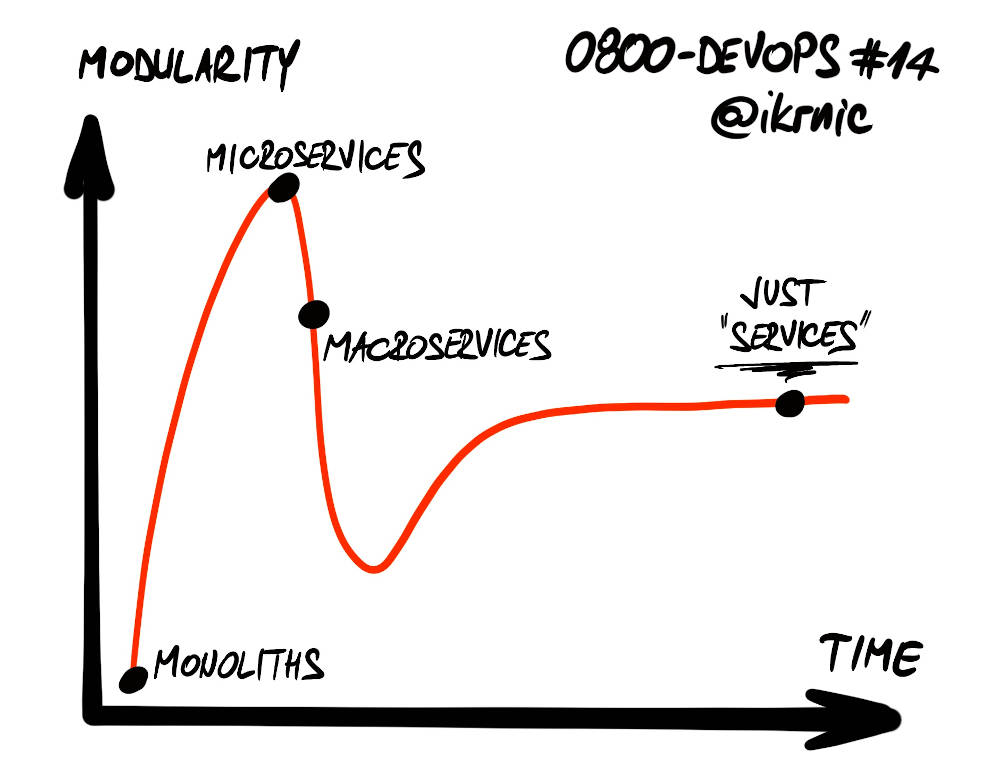

After hitting both extremes (and understanding their business and their systems better along the way), organizations are now converging on optimal service sizes. What is happening now can be nicely described with a slightly modified version of Gartner’s Hype Cycle diagram.

How big should your services be? Nobody can answer that better than you can. But there are some guidelines:

- Align your services with the business domain and not technical capabilities (use Domain-Driven Design and think in terms of Bounded Contexts)

- Gain more insights into your business domain with techniques such as Event Storming

- Make peace with the fact that you won’t get it right on the first try

- Therefore, embrace Evolutionary Architecture principles to make your systems more flexible (keep your options open, use the Last Responsible Moment strategy)

- And a piece of practical advice borrowed from woodworking: when in doubt whether to slice or not, leave it in one piece. You can always slice it later if necessary

