TL;DR

To install IBM API Connect 2018 on your local machine, do the following:

- Clone the ibm-api-connect repository from GitHub

- Copy the API Connect installation files into the local-install-apicv2018/apic folder

- Run the following commands:

cd local-install-apicv2018

vagrant up

vagrant ssh

cd /vagrant/scripts

make loadGW

make prep

make work

Having worked with IBM API Connect for the last few years, I often need access to an isolated APIC environment, be it for demo purposes or to test new functionality. With the new microservice architecture, installing API Connect 2018 can be challenging.

The first limiting factor is the ability to meet the hardware requirements. At the current maintenance level of APIC, 2018.4.1.9, the minimum hardware requirements are 12 CPU cores and 48GB of RAM. On top of that, 532GB of disk space is required. That is not something you can easily find in a typical desktop environment. If we manage to lower these requirements to, let’s say, 8 CPU cores, 24GB of RAM and 200GB of disk space, installing APIC locally becomes possible.

But how do we get there? We might choose not to install some components, for instance the Developer Portal. But, more often than not, we want to have the whole environment available. So what can we do instead? As the analysis below shows, installing all components on the same node has some benefits. For the majority of components, the resources requested are less than the required minimum. With this, we can get close to 8 cores and 24GB of RAM. In this installation, the Gateway was limited further to enable all the pods to run. Again, this is not something that you would do for your production or even test environment, but for a demo it should suffice.

OK, now that we know how a local installation can be achieved, let’s see how to do it.

Watch the video tutorial or keep on reading. Good luck!

Gathering API Connect Installation Files

Installation files are available from IBM Fix Central. The current maintenance level of APIC is listed on IBM’s Fix Packs Available for IBM API Connect v2018.x page. This installation has been tested with v2018.4.1.9-ifix1.0, but newer versions should work as well.

The following installation files are needed:

analytics-images-kubernetes_lts_v2018.4.1.9-ifix1.0.tgz

apicup-linux_lts_v2018.4.1.9-ifix1.0

dpm2018419.lts.tar.gz

idg_dk2018419.lts.nonprod.tar.gz

management-images-kubernetes_lts_v2018.4.1.9-ifix1.0.tgz

portal-images-kubernetes_lts_v2018.4.1.9-ifix1.0.tgz

Together, the installation files take up 4.2 gigabytes.
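Before starting, it can save time to confirm that all six files are in place. The following is a minimal sketch rather than part of the repository: check_apic_files is a hypothetical helper name, and the file names are the v2018.4.1.9-ifix1.0 ones listed above, so they need adjusting for a newer fix pack.

```shell
# Report every required installation file that is missing from a directory.
# $1: directory that should contain the downloaded files.
# Returns 0 when all files are present, 1 otherwise.
check_apic_files() {
  dir=$1
  missing=0
  for f in \
    analytics-images-kubernetes_lts_v2018.4.1.9-ifix1.0.tgz \
    apicup-linux_lts_v2018.4.1.9-ifix1.0 \
    dpm2018419.lts.tar.gz \
    idg_dk2018419.lts.nonprod.tar.gz \
    management-images-kubernetes_lts_v2018.4.1.9-ifix1.0.tgz \
    portal-images-kubernetes_lts_v2018.4.1.9-ifix1.0.tgz
  do
    if [ ! -f "$dir/$f" ]; then
      echo "missing: $f" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

Called with the folder the files were copied into, it prints the name of each missing file and returns a non-zero status if anything is absent.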

Installing API Connect

To install API Connect, we first need a Linux machine. To me, Vagrant is the best way to get a Linux VM. Vagrant machines have a minimal disk footprint, and to run Kubernetes we only need the Linux kernel, not a desktop UI. I’ve chosen bento/ubuntu-16.04 as the image, which is built by the Chef Bento project. You will need a desktop machine with at least 32GB of RAM and 8 virtual cores. In my case, it is a Lenovo laptop with an Intel(R) Core(TM) i7-7700HQ CPU @ 2.80GHz, 2808 MHz, 4 Core(s), 8 Logical Processor(s).

The IP address of the machine will be set to 10.0.0.100 and its hostname to apic.

Bootstrapping the Vagrant Machine

After cloning the ibm-api-connect repository, change directory to local-install-apicv2018. You will find the Vagrantfile there. Presuming you have a working Vagrant installation, you can just run the vagrant up command.

In the words of the Vagrant documentation, the vagrant up command

“… creates and configures guest machines according to your Vagrantfile.

This is the single most important command in Vagrant, since it is how any Vagrant machine is created.

Anyone using Vagrant must use this command on a day-to-day basis.”

Inspecting the Vagrantfile, we can see that provisioning the VM runs scripts that perform the following:

- disable_swap – Kubernetes will not run if swap is turned on, so we need to turn it off.

- set_max_map_count – The Analytics component needs this parameter tuned in order to run.

- change_time_zone – Sets the timezone to Europe/Zagreb. You are welcome to change it in bootstrap-timezone.sh.

- enable_ntp – It is good practice to have NTP enabled.

- docker – Self-explanatory. Installs Docker.

- kubectl – This step installs more than the kubectl command. It also installs the kubeadm tool, which will be used to create a new k8s cluster. Take a look at bootstrap-kubelete-kubectl-kubeadm.sh for more details.

- helm – Installs Helm, which is required for the APIC installation.

- jq – A nice tool to have when you need to parse JSON structures.

- bashrc – Bash functions for easier access to the Dashboard and the DP UI.

- apicup – Installs the apicup tool, which will be used to set up the new APIC installation.

- MailHog – A fake e-mail server that will accept e-mails when creating a new Portal and other resources.
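Two of these steps, disabling swap and raising vm.max_map_count, are easy to verify from inside the VM. The sketch below is not taken from the repository’s bootstrap scripts: swap_is_off and max_map_count_ok are hypothetical helper names, and the threshold of 262144 is an assumption based on the minimum that Elasticsearch documents for vm.max_map_count, so check it against the IBM requirements for your APIC version.

```shell
# Assumed minimum for vm.max_map_count (the value Elasticsearch documents).
REQUIRED_MAX_MAP_COUNT=262144

# Succeeds when the swap table lists no active swap area.
# $1: file in /proc/swaps format (defaults to /proc/swaps itself).
swap_is_off() {
  # Line 1 is the header; every further line is an active swap area.
  awk 'NR > 1 { active++ } END { exit (active > 0) }' "${1:-/proc/swaps}"
}

# Succeeds when the given vm.max_map_count value is high enough.
# $1: current value, e.g. the content of /proc/sys/vm/max_map_count.
max_map_count_ok() {
  [ "$1" -ge "$REQUIRED_MAX_MAP_COUNT" ]
}

# Example, to be run inside the VM:
#   swap_is_off && max_map_count_ok "$(cat /proc/sys/vm/max_map_count)" && echo "ready"
```

Both helpers take their input as an argument, so they can also be tried against a saved copy of the files rather than the live /proc entries.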

After vagrant up finishes, you should be able to log in to the VM.

Accessing the Machine via SSH

You can just type vagrant ssh and this will get you into the VM.

Usually, I prefer to use a stand-alone tool for SSH access. To make it work, use the following information:

IP: 10.0.0.100

username: vagrant

private key: .vagrant/machines/apic/virtualbox/private_key

Preparing for Install

After the VM is provisioned, it has all the tools, but nothing is active yet. So we need to provision the Kubernetes environment, set up Helm with Tiller, and install ingress and the Docker registry. After this, as the last step, we will use the apicup tool to upload the APIC images into the k8s Docker registry.

For this, we will use the granddaddy of build tools: the good old Makefile. The Makefile is located at /vagrant/scripts/Makefile in the VM. The original author of the Makefile-based installation is Chris Phillips; it can be found on GitHub.

Looking in the file, you can see that the tasks are divided into two phases. The first is the prep phase:

prep: k8s helm ingress storage registry upload

The second is the work phase:

work: checkReady smtp buildYaml deploy configureAPIC dashboard

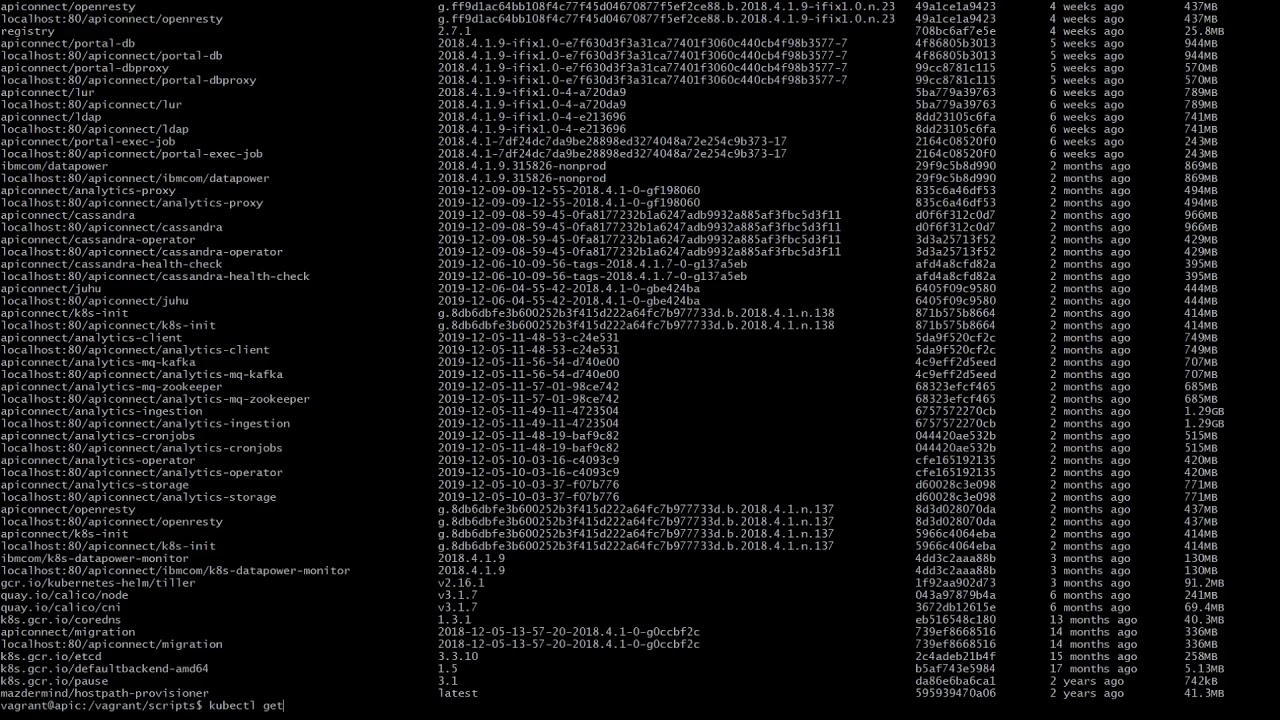

Loading DataPower Image Into Docker Registry

Before we run the preparation phase, we need to load the gateway images into the local Docker registry. Run make loadGW. This should load the DataPower Gateway and DataPower monitor images into the local Docker registry and write the Gateway and monitor image tags into envfile. If you tail envfile, it should contain something like:

export GTW_IMAGE_TAG=2018.4.1.9.315826-nonprod

export DPM_IMAGE_TAG=2018.4.1.9

Running The Preparation Phase
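Before starting the preparation phase, it may be worth confirming that envfile really carries both image tags. A minimal sketch, assuming envfile is a plain shell file of export lines as in the sample above; envfile_has_tags is a hypothetical helper and not part of the Makefile.

```shell
# Succeeds when the given env file defines both image tags.
# $1: path to the env file; include a slash (e.g. ./envfile) so that
#     the shell does not search PATH for it.
envfile_has_tags() {
  (
    # Run in a subshell so the sourced variables do not leak out,
    # and clear any values inherited from the calling shell first.
    unset GTW_IMAGE_TAG DPM_IMAGE_TAG
    . "$1" || exit 1
    [ -n "${GTW_IMAGE_TAG:-}" ] && [ -n "${DPM_IMAGE_TAG:-}" ]
  )
}

# Example, from the directory that holds envfile:
#   envfile_has_tags ./envfile && echo "gateway images are tagged"
```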

With those images loaded, we can run make prep. This runs the following targets:

- k8s – Installs Kubernetes

- helm – Installs Tiller on the k8s server

- ingress – Installs the nginx ingress

- storage – Sets up a local k8s StorageClass with a local hostpath provisioner

- registry – Installs a Docker registry on k8s

- upload – Uses the apicup tool to upload the APIC 2018 images from the *.tgz files into the Docker registry

This phase takes about 20 minutes to finish.

Installing APIC into Kubernetes

Now that everything is prepared, we can finally install API Connect into k8s. The installation is done with the help of the apicup tool, which uses Helm to install the APIC charts into k8s. At this point, we just need to run make work. The installation takes about 15 minutes. It also creates a default APIC topology and sets a default password for the admin user. These defaults can be changed in envfile prior to the installation.

Accessing the Environment

The installed APIC environment uses the nip.io service, which maps DNS hostnames to the VM’s IP address. To access the Cloud Management console, open https://cloud.10.0.0.100.nip.io in your browser, assuming the VM’s IP is set to 10.0.0.100. All the endpoints can be found in envfile.
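Because nip.io encodes the IP address in the hostname itself, the endpoint names follow directly from the VM’s IP. A small sketch of that naming scheme, where nip_host is a hypothetical helper and cloud is the only prefix confirmed by the text above; the remaining prefixes should be taken from envfile.

```shell
# Build a nip.io hostname; nip.io resolves <anything>.<ip>.nip.io to <ip>.
# $1: endpoint prefix, $2: IPv4 address of the VM.
nip_host() {
  printf '%s.%s.nip.io\n' "$1" "$2"
}

nip_host cloud 10.0.0.100   # cloud.10.0.0.100.nip.io
```

If the VM is given a different IP address, only the second argument changes.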

Accessing the k8s Dashboard

To open the k8s Dashboard, type dashboard in the VM terminal. Further steps are given in the output of the command. As the Vagrant VM forwards port 8001 to localhost, the link below can be opened on the host machine.

vagrant@apic:~$ dashboard

Access Dashboard by opening http://localhost:8001/api/v1/namespaces/kubernetes-dashboard/services/https:kubernetes-dashboard:/proxy and login using token:

eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJhZG1pbi11c2VyLXRva2VuLWNscHNrIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQubmFtZSI6ImFkbWluLXVzZXIiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC51aWQiOiIwMmQxMDJlNi1lNTdhLTRmNmItYTYyYy0wNzhjYTlkZjZiMGIiLCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZS1zeXN0ZW06YWRtaW4tdXNlciJ9.A7XBP3hqh8UcXXfqcJZdQuhv5Mc4GW1dnki337cfo-hqCjcI4YF6H-qocl-O7kNR6wMboMafs_wGx_vefxFkJWPXIP1nFTWAPlbrn59vHgHAtIfJVrnWu4Q4yk6Tc0BerwZQrAn8nS1Veqg0heegg9E2Dt9MjMbX9THge0F6lYW73bFoWsWmLcOpwLfMqjrQaY6_QDOraELCLsBAliAOt25IrbDeYJYriaToJTyJt-jv_2GCow-AueI_-ORRvKeZH-KE6fL69hxDfDu6YNgpWUpS4UYtp4zJeb9afgtTPFIWEmIxAzUBtbE7x6VlRju8j7qtParRowUanNXzjsjx8g

Starting to serve on [::]:8001

Accessing DataPower Console GUI

To expose the DataPower GUI, you need to forward a port from the VM. It is a little more complicated than exposing the Dashboard, as two steps are needed.

First, run the command dpui:

vagrant@apic:~$ dpui

Run in ssh following: 'socat tcp-listen:9090,bind=10.0.0.100,reuseaddr,fork tcp:localhost:9090'

Forwarding from 127.0.0.1:9090 -> 9090

Forwarding from [::1]:9090 -> 9090

In another terminal, run the following:

socat tcp-listen:9090,bind=10.0.0.100,reuseaddr,fork tcp:localhost:9090

With the command above running, open https://10.0.0.100:9090/dp/index.html on the host machine and log in with admin/admin as username and password.

Local API Connect v2018.4.1.9-ifix1.0 Environment Analysis

Below is a table with APIC pods running in the local Kubernetes environment.

| Name | CPU Requests | CPU Limits | Memory Requests | Memory Limits | AGE |
|---|---|---|---|---|---|
| r307b84ffe1-analytics-client-5644b87874-pfws4 | 300m (3%) | 0 (0%) | 256Mi (1%) | 0 (0%) | 7d5h |
| r307b84ffe1-analytics-ingestion-7ffbcb64d8-9kznk | 400m (5%) | 0 (0%) | 1000Mi (4%) | 0 (0%) | 7d5h |
| r307b84ffe1-analytics-mtls-gw-59c85d545-9zgbn | 100m (1%) | 0 (0%) | 128Mi (0%) | 0 (0%) | 7d5h |
| r307b84ffe1-analytics-operator-7567776976-sdxpc | 100m (1%) | 100m (1%) | 128Mi (0%) | 128Mi (0%) | 7d5h |
| r307b84ffe1-analytics-storage-coordinating-5465bd7b94-pn88r | 100m (1%) | 1 (12%) | 4608Mi (19%) | 6Gi (25%) | 7d5h |
| r307b84ffe1-analytics-storage-data-0 | 100m (1%) | 1 (12%) | 6Gi (25%) | 8Gi (34%) | 7d5h |
| r307b84ffe1-analytics-storage-master-0 | 100m (1%) | 1 (12%) | 4608Mi (19%) | 6Gi (25%) | 7d5h |
| r3a42842260-cassandra-operator-7f4b668dff-7nrf6 | 100m (1%) | 100m (1%) | 256Mi (1%) | 256Mi (1%) | 7d5h |
| r5673b1bbde-datapower-monitor-5d6c5797c4-2pwwl | 250m (3%) | 500m (6%) | 256Mi (1%) | 1Gi (4%) | 7d5h |
| r5673b1bbde-dynamic-gateway-service-0 | 2 (25%) | 4 (50%) | 1Gi (4%) | 8Gi (34%) | 7d5h |
| r8a4435c9ec-analytics-proxy-965574746-x9t87 | 100m (1%) | 0 (0%) | 128Mi (0%) | 0 (0%) | 7d5h |
| r8a4435c9ec-apiconnect-cc-0 | 250m (3%) | 0 (0%) | 1925Mi (8%) | 4Gi (17%) | 7d5h |
| r8a4435c9ec-apim-v2-6449c469f8-f866t | 300m (3%) | 0 (0%) | 128Mi (0%) | 0 (0%) | 7d5h |
| r8a4435c9ec-client-dl-srv-6cf94576fd-qhp6p | 0 (0%) | 0 (0%) | 8Mi (0%) | 0 (0%) | 7d5h |
| r8a4435c9ec-juhu-5dcfd4fcd9-jhgfv | 100m (1%) | 0 (0%) | 192Mi (0%) | 0 (0%) | 7d5h |
| r8a4435c9ec-ldap-d68c8dbcb-p4z4j | 100m (1%) | 0 (0%) | 64Mi (0%) | 0 (0%) | 7d5h |
| r8a4435c9ec-lur-v2-754999b57f-glh57 | 100m (1%) | 0 (0%) | 128Mi (0%) | 0 (0%) | 7d5h |
| r8a4435c9ec-ui-7ddc4c84d9-4cgdw | 100m (1%) | 0 (0%) | 8Mi (0%) | 0 (0%) | 7d5h |
| rbcb357bd8b-apic-portal-db-0 | 400m (5%) | 0 (0%) | 640Mi (2%) | 0 (0%) | 7d5h |
| rbcb357bd8b-apic-portal-nginx-658f55d8ff-6sdx9 | 75m (0%) | 0 (0%) | 512Mi (2%) | 0 (0%) | 7d5h |
| rbcb357bd8b-apic-portal-www-0 | 500m (6%) | 0 (0%) | 1Gi (4%) | 0 (0%) | 7d5h |

We can aggregate consumption by APIC component: management, analytics, portal and gateway.

We can see that pod names start with an id. This is the id of the Helm deployment. We can use these ids to get the CPU and memory requirements per component.

The ids are:

| Management | Analytics | Portal | Gateway |
|---|---|---|---|
| r8a4435c9ec and r3a42842260 | r307b84ffe1 | rbcb357bd8b | r5673b1bbde |

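To get the total CPU requests for a component, we can use a command like kc describe node apic | grep -E 'r8a4435c9ec|r3a42842260' | awk '{ print $3+0 }' | paste -sd+ - | bc. Note that $3+0 only strips the m suffix, so a request given in whole cores, such as the gateway’s 2, is counted as 2 rather than 2000m and has to be converted by hand.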

| Component | CPU Requests | Memory Requests |
|---|---|---|
| Management | 1150m | 2837 Mi |
| Analytics | 1200m | 16872 Mi |
| Portal | 975m | 2176 Mi |
| Gateway | 2250m | 1280 Mi |
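The per-component figures can be cross-checked against the pod table with a little awk. The following is a minimal sketch, not the command used above: sum_requests is a hypothetical helper, it expects simplified name, CPU, memory triples rather than raw kubectl output, and it converts whole cores to millicores and Gi to Mi before summing.

```shell
# Sum CPU and memory requests for pods whose name matches a regular expression.
# stdin: one "<pod-name> <cpu-request> <memory-request>" triple per line,
#        in the notation of the pod table (300m, 2, 256Mi, 6Gi).
# $1:    extended regular expression matched against the pod name.
sum_requests() {
  awk -v pat="$1" '
    # CPU: "300m" is 300 millicores, a bare "2" is 2 cores = 2000 millicores.
    function millicores(v) { return (v ~ /m$/) ? v + 0 : (v + 0) * 1000 }
    # Memory: "6Gi" is 6 * 1024 Mi, "256Mi" is taken as is.
    function mebibytes(v)  { return (v ~ /Gi$/) ? (v + 0) * 1024 : v + 0 }
    $1 ~ pat { cpu += millicores($2); mem += mebibytes($3) }
    END { printf "%dm %dMi\n", cpu, mem }
  '
}

# The two gateway pods from the pod table.
gateway_pods='r5673b1bbde-datapower-monitor-5d6c5797c4-2pwwl 250m 256Mi
r5673b1bbde-dynamic-gateway-service-0 2 1Gi'

printf '%s\n' "$gateway_pods" | sum_requests '^r5673b1bbde-'   # 2250m 1280Mi
```

For management, which spans two Helm deployments, the pattern would be '^(r8a4435c9ec|r3a42842260)-'.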

If we aggregate again, we get 5575m of CPU requests and 23165Mi of memory requests.

Looking at the consumption at the node level, we will see something like:

| Resource | Requests | Limits |
|---|---|---|
| CPU | 6575m (82%) | 7700m (96%) |
| Memory | 23305Mi (97%) | 34516Mi (143%) |

Basic arithmetic tells us that other resources are taking 1 additional core and only 140Mi of memory. Also, we can notice that in the current setup we are at 97% of requested memory. If we lower the available memory any further, we risk that pods will be unable to start.
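The arithmetic behind these figures is short enough to spell out. The sketch below only restates the numbers from the tables above; the variable names are illustrative.

```shell
# Per-component requests from the component table (CPU in millicores, memory in Mi):
# management + analytics + portal + gateway.
cpu_total=$((1150 + 1200 + 975 + 2250))
mem_total=$((2837 + 16872 + 2176 + 1280))

# Node-level requests from the node table.
node_cpu=6575
node_mem=23305

echo "APIC pods:  ${cpu_total}m CPU, ${mem_total}Mi memory"
echo "Other pods: $((node_cpu - cpu_total))m CPU, $((node_mem - mem_total))Mi memory"
```

This prints 5575m and 23165Mi for the APIC pods, and 1000m and 140Mi for everything else running on the node.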

To achieve this small footprint, one pod was downsized after the installation: the IBM DataPower Gateway service, pod r5673b1bbde-dynamic-gateway-service-0. Its CPU request was lowered to 2 cores (from 4) and its memory request from 6GB to just 1GB. As the limits were left at 4 cores and 8 gigabytes, DataPower should continue to work. But I would strongly suggest not doing this in any environment where stability is required.

Photo by Mitul Grover on Unsplash